- I — The Stealth Operation

- II — Public Skeptic, Private Builder

- III — What It Actually Does

- IV — The Technical Reality

- V — The Architecture

- VI — The Patent Portfolio

- VII — IP Strength and Vulnerabilities

- VIII — Netflix's Broader AI Strategy

- IX — The Competitive Landscape

- X — The Labor Question

- XI — Who Is Locked Out

- XII — The Consumer Wildcard

- XIII — What Netflix Actually Bought

- XIV — What Comes Next

On March 5, 2026, Netflix announced the acquisition of InterPositive, LLC — a sixteen-person company with no public product launch, no content library, and no disclosed commercial business. Bloomberg subsequently reported that total consideration could reach $600 million, including earnout. If accurate, it ranks among Netflix's largest acquisitions in company history, and among the most significant AI-related transactions by any major studio.

The deal is best understood not as a talent acquisition or a content deal. It is a strategic purchase of a new production-and-post infrastructure layer. The core question is not simply why Netflix bought InterPositive. It is which part of the media supply chain Netflix believed it could now internalize — and which part every other studio can no longer access.

This analysis proceeds in four parts: the acquisition and the industrial logic behind it; the architecture implied by the patent filings; an assessment of the patent portfolio itself (what is granted, what is merely published or pending, where the claim set appears strongest, and where vulnerability exists); and the strategic consequences for Netflix, competing studios, post houses, and technology vendors.

In 2022, while publicly dismissing generative AI on podcasts and at investment conferences, Ben Affleck quietly incorporated Fin Bone, LLC in Los Angeles and began assembling a sixteen-person team of engineers, researchers, and creatives. The company was backed by RedBird Capital Partners, the investment firm led by Gerry Cardinale. It operated in near-total obscurity for almost four years. No press releases, no demo reels, no venture funding announcements. When Netflix acquired the company in early 2026 — by then renamed InterPositive, LLC — the industry learned about it from a Netflix blog post, not a press tour.

Netflix did not disclose the financial terms of the acquisition; the $600 million ceiling figure, which includes a performance-based earnout, comes from Bloomberg's reporting. Bloomberg also reported that after working on the technology for several years, Affleck began soliciting investment in 2025, meeting with venture capital firms and Hollywood companies, and that those conversations led Netflix to evaluate InterPositive as an in-house production tool rather than a commercial platform.

The transaction followed Netflix's decision to walk away from its months-long pursuit of Warner Bros. Discovery. In this context, the InterPositive acquisition represents a markedly different strategic move: rather than massive horizontal consolidation, Netflix chose a targeted investment in proprietary AI filmmaking capability that it can build upon internally.

Netflix confirmed the acquisition through its technology blog on March 5, 2026. All sixteen InterPositive team members would join Netflix. Affleck would serve as a senior adviser. The technology, Netflix made clear, would be offered to its creative partners but would not be sold commercially in the marketplace.

The timeline of Affleck's public statements about AI, read alongside the InterPositive patent filing dates, reveals a deliberate pattern. At CNBC's Delivering Alpha summit in 2024, Affleck told the audience that AI "cannot write you Shakespeare" and that "nothing new is created" by large language models. These were not throwaway comments — they were a precise articulation of the boundary his company was designed to operate within.

On the Joe Rogan Experience in January 2026, weeks before the acquisition became public, Affleck elaborated: it is "bullshit" to think AI can produce meaningful movies from scratch. But he then drew the distinction that defines InterPositive's entire product thesis.

"If you can shoot a scene in a studio and then make it realistically look like the North Pole using AI instead of actually going to the North Pole, that saves money, saves time, and lets you focus on the performances."

That is not a man who dislikes AI. That is a man who spent four years building a very specific version of it. In the acquisition announcement, Affleck described his initial encounter with generative AI video tools: the outputs initially alarmed him, but he recognized quickly that the technology in its generic form lacked filmmaking knowledge — "There was this really deep engineering, math, science level of expertise associated with creating this — but no artistic, no filmmaking information whatsoever." That gap became InterPositive's founding premise.

The public skepticism served a second function. By consistently framing generative AI as creatively inadequate, Affleck was implicitly validating InterPositive's approach — tools that enhance existing footage rather than replace the filmmaking process. Every interview was, in effect, a market-positioning statement for a product no one knew existed.

InterPositive's system begins where traditional production ends: with the dailies. Raw footage from a soundstage — every take, every angle, every lighting setup — becomes the training dataset for a production-specific model. This is not a model trained on the open internet. It knows only what was shot on that set, by that crew, for that project.

"It's not about text-prompting or generating something from nothing. You're building a model from your own material. That's how this works. You have to create your movie essentially first before you can really build your model around your movie using AI."

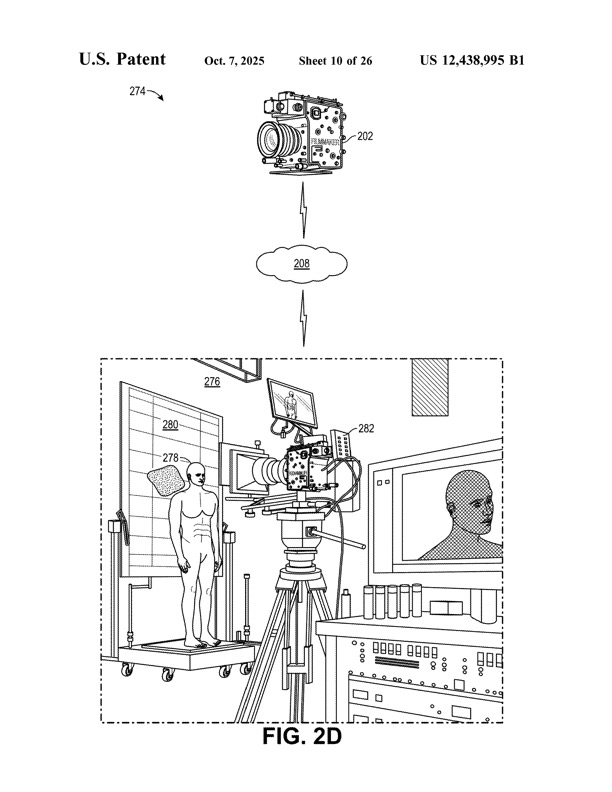

The technical architecture rests on two proprietary components. The captioner model, internally designated SamildAnach, converts visual frames into structured text descriptions using cinematographic vocabulary: shot type, focal length, aperture, lighting ratios, camera movement. The generation model, designated Filmmaker, produces new frames conditioned on those descriptions plus the original footage. The two operate in a closed loop: SamildAnach evaluates Filmmaker's output, and Filmmaker refines based on that evaluation. This feedback cycle — protected across multiple patent filings — is what maintains visual consistency across generated frames.
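The closed loop described above can be pictured with a toy sketch. Everything in it is a stand-in: `caption` and `generate` are hypothetical placeholder functions that operate on a dictionary of numeric attributes, not InterPositive's models, and the proportional correction rule is an assumption chosen only to show the evaluate-then-refine cycle.

```python
from typing import Dict

Attributes = Dict[str, float]


def caption(frame: Attributes) -> Attributes:
    """Hypothetical captioner: reads cinematographic attributes off a frame.

    In this toy, a "frame" is already a dict of attributes, so the
    captioner simply returns a copy of it.
    """
    return dict(frame)


def generate(params: Attributes) -> Attributes:
    """Hypothetical generator with a systematic 10% undershoot, so its
    output does not match the requested parameters on the first pass."""
    return {name: value * 0.9 for name, value in params.items()}


def closed_loop(target: Attributes, steps: int = 20, tol: float = 1e-3) -> Attributes:
    """Refine generator inputs until the captioner's reading matches the target."""
    params = dict(target)
    frame = generate(params)
    for _ in range(steps):
        observed = caption(frame)
        errors = {name: target[name] - observed[name] for name in target}
        if max(abs(e) for e in errors.values()) < tol:
            break  # captioner's description agrees with the target
        # Push each requested parameter by the amount the output fell short.
        params = {name: params[name] + errors[name] for name in params}
        frame = generate(params)
    return frame


if __name__ == "__main__":
    target = {"focal_length_mm": 35.0, "key_fill_ratio": 4.0}
    print(closed_loop(target))
```

The point of the sketch is the control flow: the generator never grades its own output. A separate describing model does, and the mismatch between description and intent drives the next pass.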

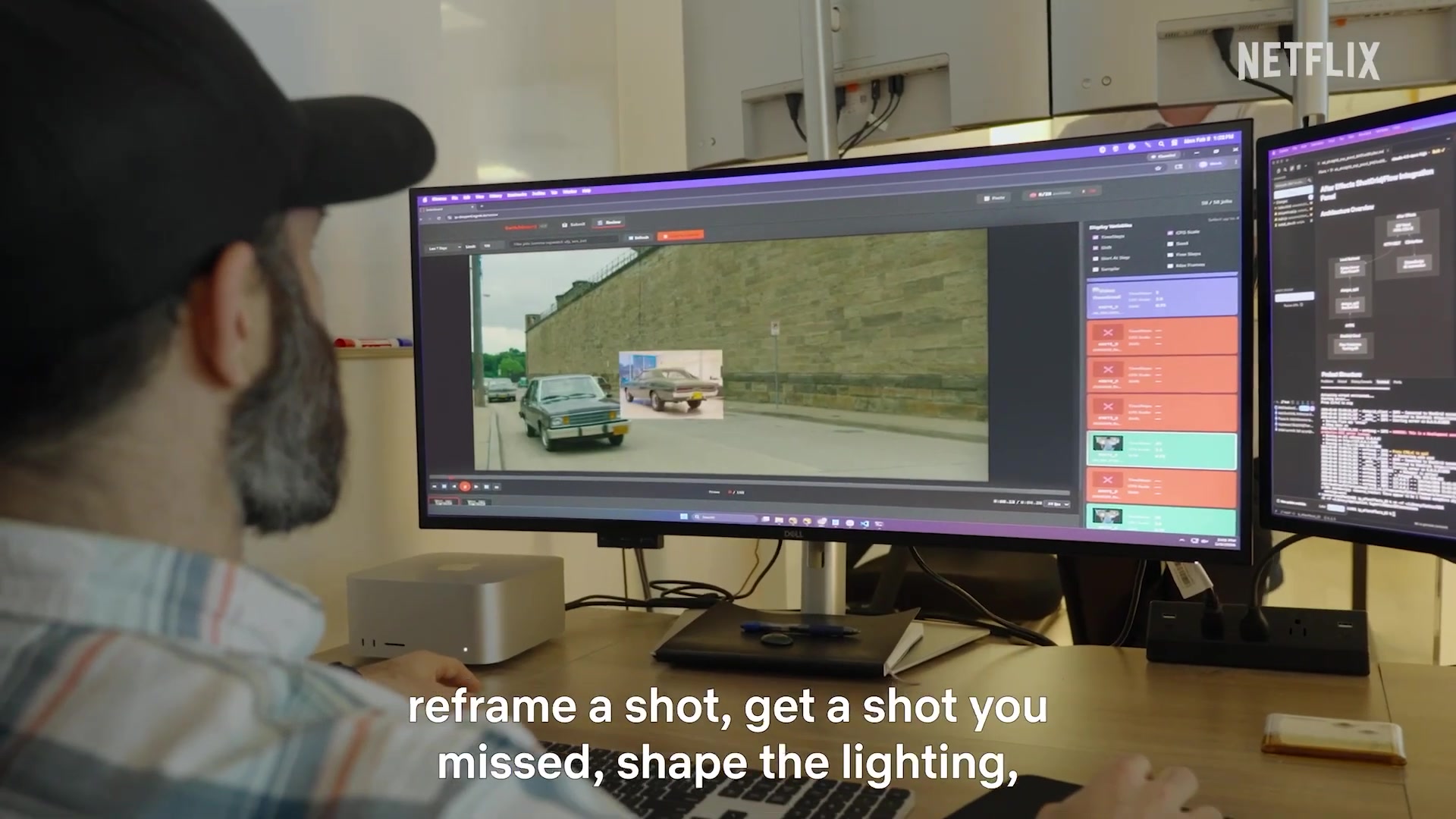

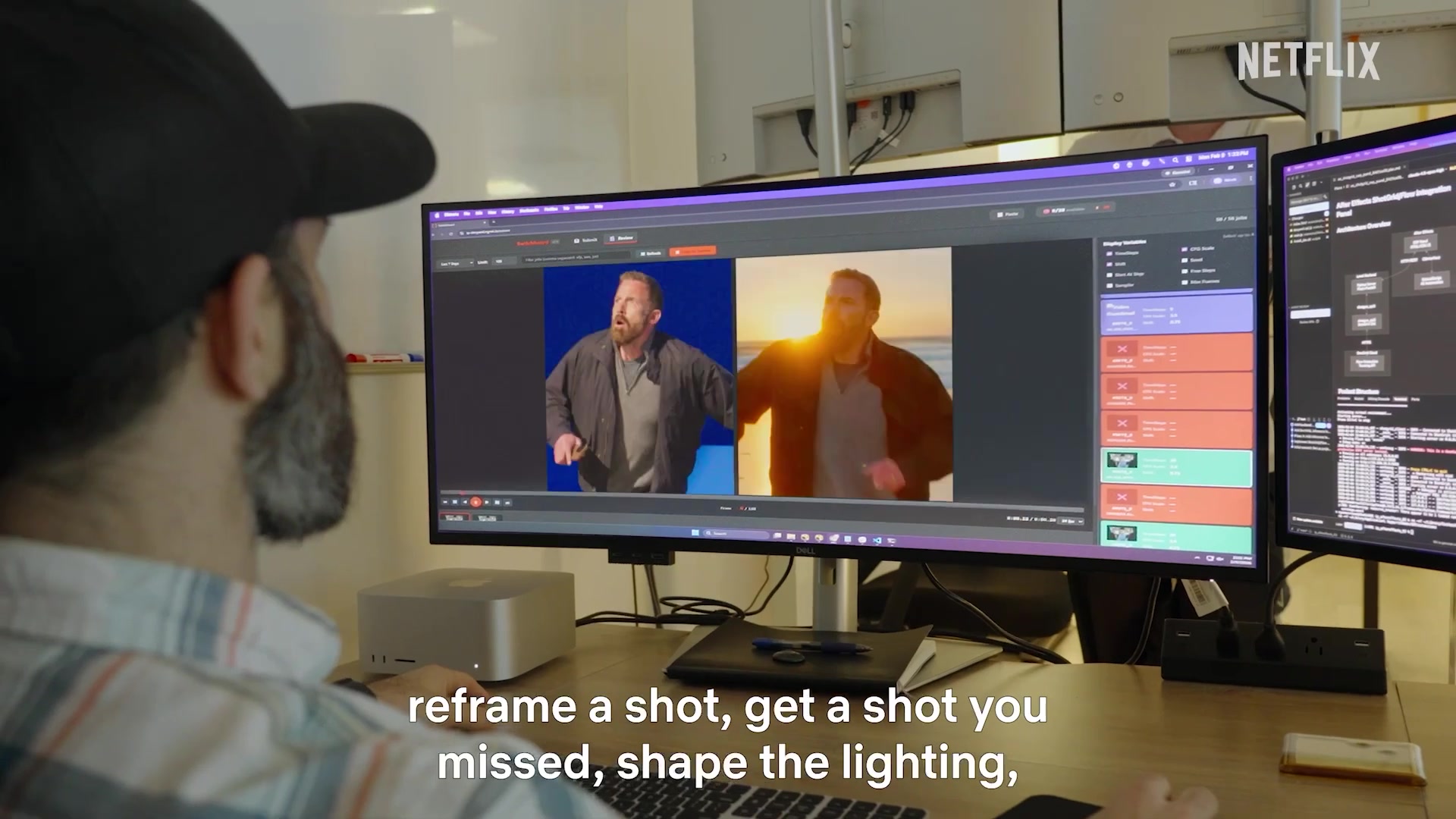

The practical applications, as Affleck described them: removing wires on stunts, reframing a shot, generating coverage that was never physically captured, shaping the lighting, enhancing backgrounds. The tools focus exclusively on filmmaking technique — never on performances. Affleck draws this line sharply: the system is trained on the character the actor has already built, and it cannot and does not impose anything alien onto it.

The synthetic coverage capability warrants particular attention. Because the model has internalized how a specific scene was shot, lit, and composed, it can produce additional angles and framings that were never physically captured. This is extrapolation from a locked dataset of real material, not generation from nothing. An editorial department can cut with these synthetic shots as though they originated from an actual camera. That capability does not merely compress post-production schedules. It potentially reduces the volume of coverage a production needs to physically capture in the first place.

What Netflix acquired is not a breakthrough in machine learning. Frame interpolation, view synthesis, neural radiance fields, Gaussian splatting, inpainting-based reframing, and diffusion model fine-tuning all exist as published research and, in some cases, as commercial products. The individual ML components are implementations of known techniques.

The technology is commodity. The value is the pipeline that connects it — the security architecture, the production-ready interface, the locked-dataset training methodology, and the embedded audit trail. Netflix did not acquire a platform. It acquired a team that knew how to wire open-source ML infrastructure to existing professional media tools, train production-specific models within them, and deliver the output in a form the existing post-production pipeline could ingest.

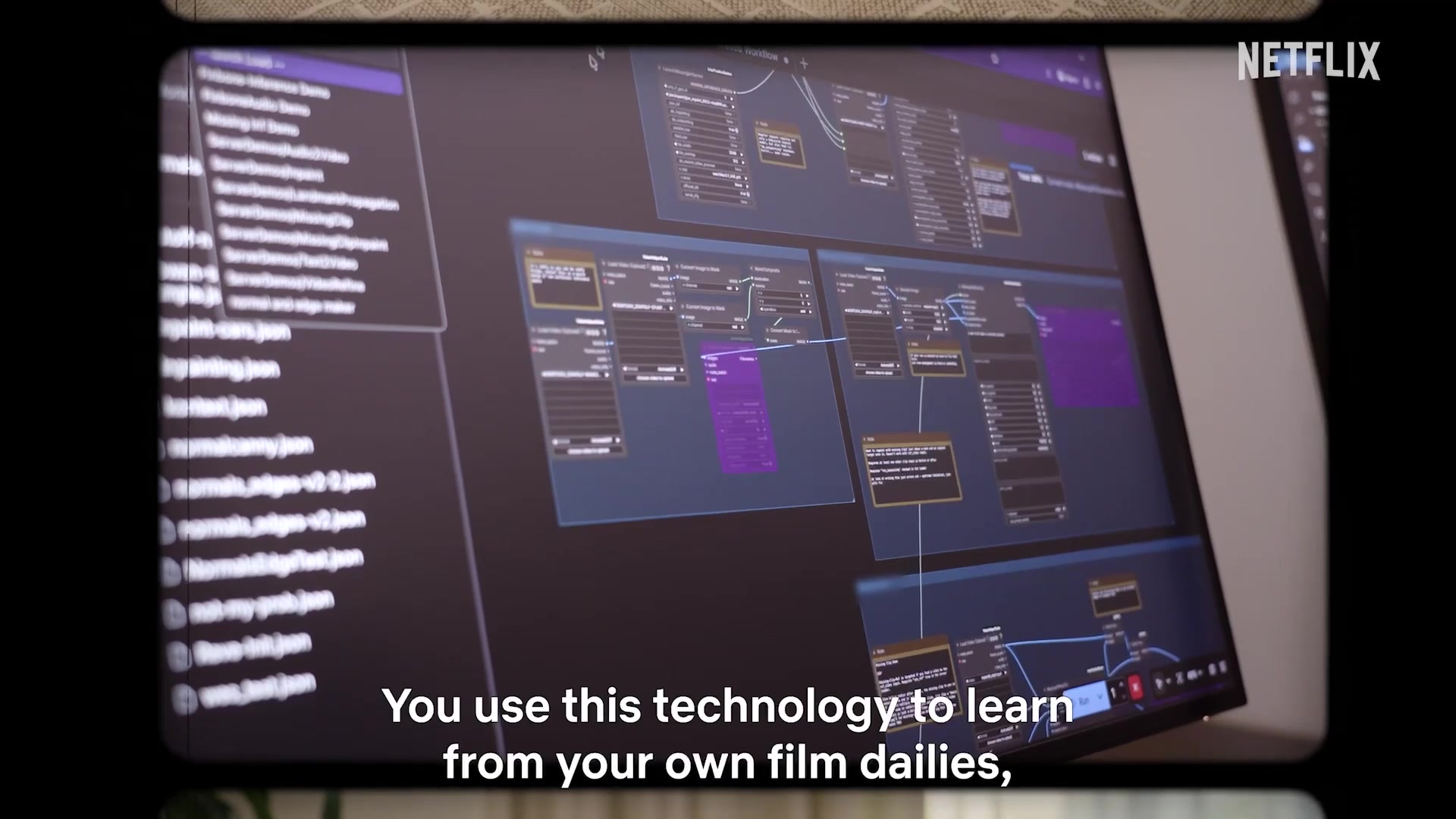

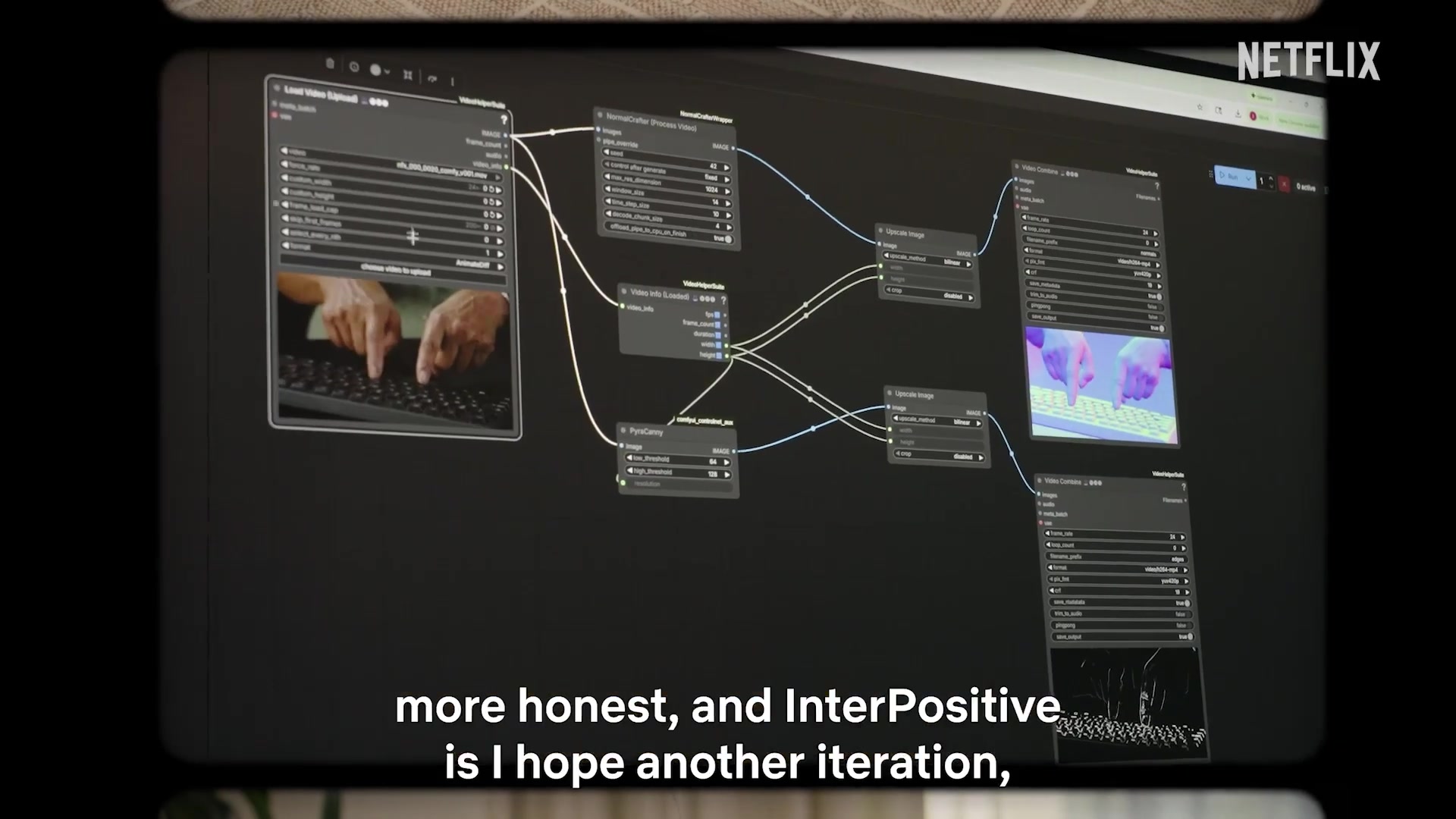

The announcement video shows two distinct interface layers. One sequence shows a node-based engineering environment — connected processing blocks, a graph-based workflow layout — consistent with tools like ComfyUI or similar ML pipeline frameworks. This appears to be the backend infrastructure, the layer where engineers configure inference workflows, not the surface a post supervisor or DIT interacts with.

The production-facing layer is something else entirely: a bespoke browser-based application running in Chromium, with a purple-themed job queue, shot preview player, and batch processing controls. A second monitor visible in the same sequences shows After Effects and ShotFlow integration documentation — confirming direct pipeline connections to standard post-production delivery infrastructure. At one point the job counter reads 50 of 50 shots processed, consistent with a real dailies batch workload. InterPositive did not hand colorists and editors a node graph. They built a purpose-built review-and-dispatch interface that speaks the language of existing finishing workflows, with the AI machinery abstracted below it.

Three elements appear to distinguish this from academic implementations. The dataset is locked: models train only on authorized production footage, eliminating the copyright exposure that plagues open-internet-trained systems. The pipeline embeds provenance tracking: the patent specifications describe metadata identifying source footage, model versions, and processing steps at the frame level. And the color science layer — preserving exposure, color temperature, and dynamic range consistency across original and generated frames — reflects production expertise that pure ML teams rarely possess.
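To make the provenance idea concrete, here is a minimal sketch of what a frame-level record could look like. The field names, the identifier formats, and the choice of SHA-256 over a JSON serialization are all assumptions for illustration; the patent specifications are described only as covering source footage, model versions, and processing steps.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class FrameProvenance:
    """Hypothetical per-frame audit record (schema is an assumption)."""

    frame_id: str                 # e.g. scene/take/frame identifier
    source_clips: List[str]       # camera-original clips the frame derives from
    model_version: str            # version of the production-specific model
    processing_steps: List[str]   # ordered operations applied to the frame

    def digest(self) -> str:
        """Stable fingerprint of the record, suitable for embedding in metadata."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


if __name__ == "__main__":
    record = FrameProvenance(
        frame_id="sc12_tk3_f0042",
        source_clips=["A012_C007"],
        model_version="filmmaker-0.1",
        processing_steps=["wire_removal", "relight"],
    )
    print(record.digest())
```

Because the digest changes whenever any field changes, a deliverable carrying it can be checked later against the recorded source clips and model version.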

The InterPositive system is architected as a seven-layer stack, where each layer is protected by one or more patent filings and each is dependent on the layers beneath it. A competitor cannot replicate just one layer — they must build or acquire the entire stack.

| Layer | Function | Patent Coverage |

|---|---|---|

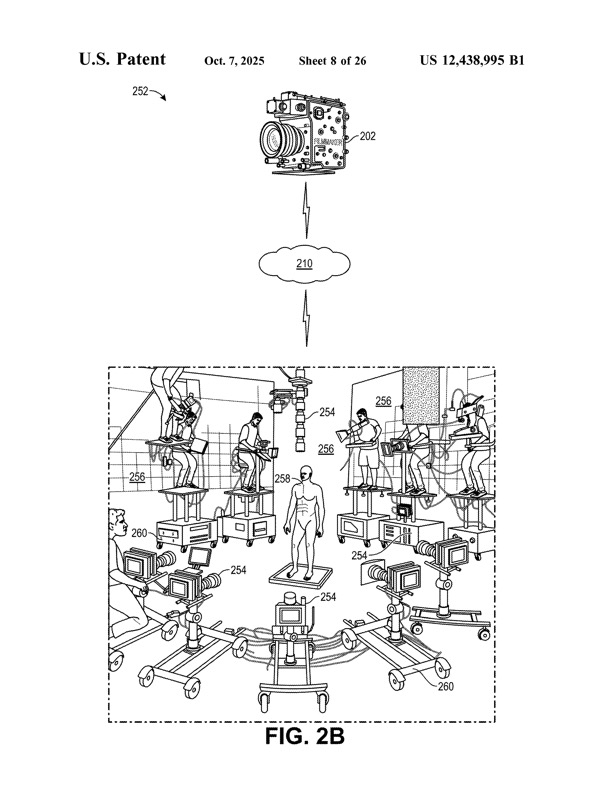

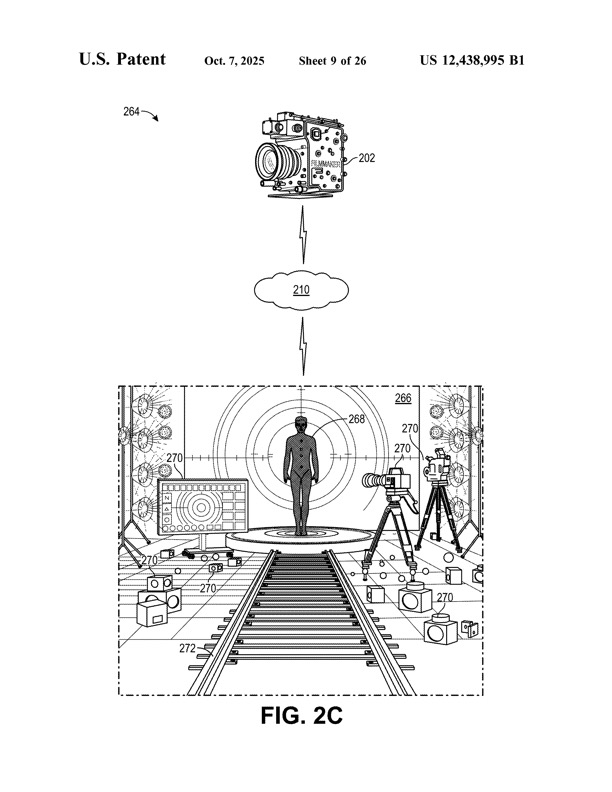

| 1 — Spatial Capture (LiDAR) | LiDAR sensors, laser locators, and robotic equipment (Techno crane, Panther Dolly, KUKA arm) capture X, Y, Z coordinates plus pitch, yaw, roll at frame level. Numerical tokenization encodes spatial data as precise values rather than text descriptions. | US 12,322,036 B1, WO2025255446A1 |

| 2 — Dataset Construction | Cinematic data collection combining LiDAR, video frames, and filmmaking metadata into structured training datasets. Multi-modal asset integration across video, audio, script, stills, storyboards. | WO2025255436A1, WO2025255425A1 |

| 3 — Synthetic Data & Simulation | Virtual simulation environment using Unreal Engine with accurate lens models and virtual lighting rigs. Simulates distortion, aberration, vignetting, and focus breathing to match real-world cinematographic optics, enabling training data at scales impossible with physical production alone. Includes simulation of professional filmmaking techniques. | WO2025255427A1, WO2025255426A1, WO2025255439A1, WO2025255441A1 |

| 4 — Model Training | Single-parameter variation methodology: one visual attribute altered per training iteration while all others remain fixed. Multi-task loss combining photometric, perceptual, and temporal consistency losses. | WO2025255428A1 |

| 5 — Captioner (SamildAnach) | Converts video frames into structured cinematographic metadata: shot type, focal length, aperture, lighting ratios, camera movement. Enables language-model control of video generation. | US 12,511,904 B1 |

| 6 — Generator (Filmmaker) | Style-controlled video generation conditioned on SamildAnach's metadata output. Closed-loop feedback with the captioner maintains visual consistency across generated frames. WO2025255432A1 covers quality-control and professional-standards enforcement within the generation pipeline. | US 12,511,837 B1, WO2025255429A1, WO2025255432A1 |

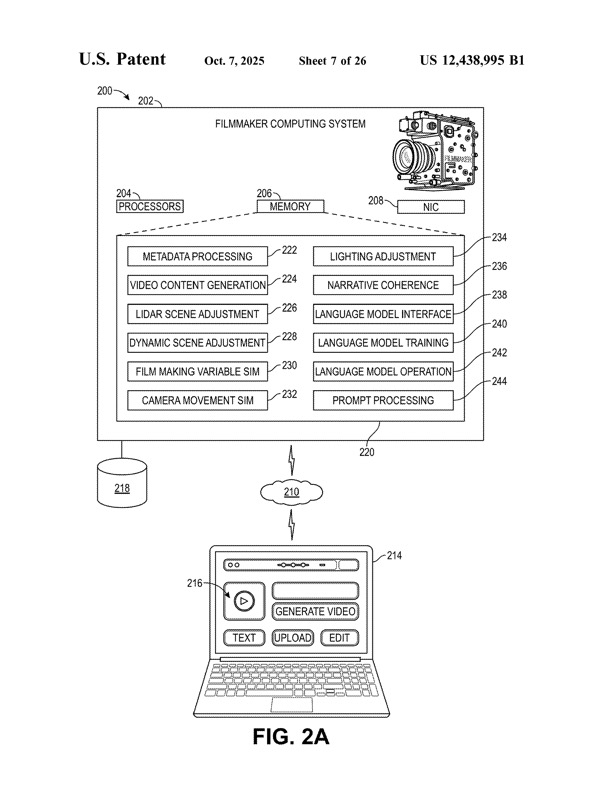

| 7 — Interface (VLM Layer) | Natural language interface enabling filmmakers to describe intent in production vocabulary; VLM translates to machine-readable parameters. Consumer extensions in development. | US 12,438,995 B1, WO2025255437A1, WO2025255433A1 |
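The single-parameter variation methodology in Layer 4 can be sketched in a few lines. This is an illustration of the idea only, under stated assumptions: the attribute names and values are hypothetical, and the function below produces the list of training setups rather than any footage.

```python
from typing import Dict, Iterator, List, Tuple

Setup = Dict[str, float]


def single_parameter_variants(
    base: Setup, sweeps: Dict[str, List[float]]
) -> Iterator[Tuple[str, Setup]]:
    """Yield (changed_attribute, setup) pairs that differ from `base`
    in exactly one attribute, holding every other attribute fixed."""
    for name, values in sweeps.items():
        if name not in base:
            raise KeyError(f"unknown attribute: {name}")
        for value in values:
            if value == base[name]:
                continue  # identical to the baseline, nothing varied
            variant = dict(base)
            variant[name] = value
            yield name, variant


if __name__ == "__main__":
    # Hypothetical baseline camera and lighting setup.
    base = {"focal_length_mm": 35.0, "aperture_f": 2.8, "color_temp_k": 5600.0}
    sweeps = {"focal_length_mm": [24.0, 35.0, 50.0], "aperture_f": [1.4, 5.6]}
    for changed, setup in single_parameter_variants(base, sweeps):
        print(changed, setup)
```

Pairing each variant with the baseline gives a model examples in which any visual difference is attributable to one named attribute, which is the property the table describes.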

The foundation layer deserves particular emphasis. LiDAR-based spatial capture is the layer every other layer in the stack depends upon. Equipment cited in the specifications includes Techno cranes, Panther Dollies, and KUKA robotic arms for sub-centimeter positioning accuracy. Without the spatial understanding enabled by this layer, the entire system collapses into conventional pixel-based AI video generation. Designing around LiDAR-based spatial understanding while maintaining system coherence is the most difficult design-around challenge in the entire portfolio.
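One way to read "numerical tokenization" is that each pose component is quantized to a fixed-resolution integer rather than described in words. The sketch below assumes that reading; the step sizes (1 mm and 0.01 degrees), the `CameraPose` type, and both function names are hypothetical and are not taken from the patent text.

```python
from dataclasses import dataclass
from typing import List

POSITION_STEP_M = 0.001   # assumed resolution: 1 mm
ANGLE_STEP_DEG = 0.01     # assumed resolution: 0.01 degrees


@dataclass(frozen=True)
class CameraPose:
    """Six-degree-of-freedom camera pose for one frame."""

    x_m: float
    y_m: float
    z_m: float
    pitch_deg: float
    yaw_deg: float
    roll_deg: float


def tokenize(pose: CameraPose) -> List[int]:
    """Quantize a pose into six integer tokens (position, then rotation)."""
    position = [round(v / POSITION_STEP_M) for v in (pose.x_m, pose.y_m, pose.z_m)]
    rotation = [
        round(v / ANGLE_STEP_DEG)
        for v in (pose.pitch_deg, pose.yaw_deg, pose.roll_deg)
    ]
    return position + rotation


def detokenize(tokens: List[int]) -> CameraPose:
    """Recover the pose to within half a quantization step per component."""
    x, y, z, pitch, yaw, roll = tokens
    return CameraPose(
        x * POSITION_STEP_M, y * POSITION_STEP_M, z * POSITION_STEP_M,
        pitch * ANGLE_STEP_DEG, yaw * ANGLE_STEP_DEG, roll * ANGLE_STEP_DEG,
    )


if __name__ == "__main__":
    pose = CameraPose(1.2342, -0.5, 2.0, 10.0, -45.25, 0.0)
    print(tokenize(pose))
```

The contrast with a text description is that the round trip loses at most half a step per axis, whereas a phrase such as "slightly left of center" cannot be inverted at all.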

The training pipeline is also substantially broader than the public narrative suggests. A production generates a rich, interconnected set of assets — dailies, production audio, script, shot lists, continuity notes, stills, lookbooks, VFX breakdowns, storyboards — all of which can be embedded into a single unified vector space through multimodal embedding. Applied to production assets, this means the model does not merely perceive what footage looks like. It comprehends the relationship between scripted intent, the director's coverage plan, the DP's lighting choices, and the actual captured frames.

As of March 2026, the public patent-family record supports four granted U.S. patents, twelve published PCT applications, and one provisional priority filing. The USPTO granted three patents between October and December 2025 — US 12,438,995 B1, US 12,511,837 B1, and US 12,511,904 B1 — and a fourth, US 12,322,036 B1, in June 2025, all assigned to Fin Bone LLC or InterPositive, LLC. Twelve PCT applications were published through WIPO in December 2025, all sharing a June 7, 2024 priority date.

These numbers require careful interpretation. Not all patent filings are equal, and treating all public filings as equivalent grants would misrepresent the portfolio's actual enforceability.

| Category | Count | Enforceable Now? | Certainty |
|---|---|---|---|
| Granted US Patents | 4 | Yes — immediately | High (examined; presumption of validity) |
| PCT Publications (WIPO) | 12 | No — pending national phase examination | Low to moderate (unexamined; claims may be narrowed or rejected) |
| Provisional Application | 1 | No (priority date only; did not itself mature into a patent) | Subject matter carried forward into later U.S. and PCT family members |

The twelve published PCT applications are not granted patents. They are published applications that must enter national phase examination in individual countries — EPO, JPO, CNIPA, and others. National examiners may narrow or reject claims based on different prior art searches and different eligibility standards. The EPO applies a stricter standard for inventive step and excludes "computer programs as such" from patentability, creating additional hurdles for AI-related claims. These twelve filings should be understood as claims to future protection, not existing protection.

The provisional application (US 63/657,756) did not itself mature into a patent. However, the public record supports that its subject matter was carried forward into multiple November 25, 2024 U.S. non-provisional filings and June 6, 2025 PCT applications, all claiming the same June 7, 2024 priority date. Three of the four granted U.S. patents — US 12,438,995 B1, US 12,511,837 B1, and US 12,322,036 B1 — sit in that priority chain.
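The filing dates above also set the nominal expiry horizon. As a rough worked example, assume the standard U.S. rule that a utility patent's term runs 20 years from its earliest non-provisional filing date (a provisional filing does not start the clock, and for a PCT-derived patent the international filing date is used). The sketch ignores patent term adjustments, terminal disclaimers, and maintenance-fee lapses, and `nominal_expiry` is an illustrative helper, not a legal calculation.

```python
from datetime import date


def nominal_expiry(filing_date: date, term_years: int = 20) -> date:
    """Filing date plus the statutory term, with no adjustments applied."""
    try:
        return filing_date.replace(year=filing_date.year + term_years)
    except ValueError:
        # Filing on Feb 29 with a non-leap target year: fall back to Feb 28.
        return filing_date.replace(year=filing_date.year + term_years, day=28)


if __name__ == "__main__":
    us_nonprovisional = date(2024, 11, 25)  # U.S. non-provisional filings
    pct_filing = date(2025, 6, 6)           # PCT applications
    print(nominal_expiry(us_nonprovisional))  # 2044-11-25
    print(nominal_expiry(pct_filing))         # 2045-06-06
```

On these assumptions, a patent issuing from a November 25, 2024 non-provisional would nominally run to late 2044, and any patent issuing from a June 6, 2025 PCT application to mid-2045.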

The practical implication: the portfolio's legal enforceability, as of March 2026, rests on four granted US patents. The remaining filings represent varying degrees of potential future protection. Investors, competitors, and facilities should read the portfolio with this structure clearly in mind.

Strengths

The portfolio's primary strength is its coordination. Sixteen public filings cover the complete pipeline from spatial capture through consumer applications. Each patent addresses a critical component, and together they form a system substantially harder to design around than any individual patent. The claims cover particular methodologies (soundstage protocol, numerical tokenization, multi-task loss, single-parameter variation), not vague AI concepts. Specific method claims are narrower than abstract functional claims, but they are generally better placed to survive validity and eligibility challenges. Four filings are already granted and enforceable, carrying a presumption of validity under US law.

The data moat deserves separate emphasis. Even if every patent were invalidated tomorrow, the proprietary training datasets, purpose-built on controlled soundstages over four years and continuously expanded by every Netflix production, would remain impossible to replicate without equivalent capital investment and years of development. The patents protect the methods. The data makes the methods work. These are distinct assets, and each reinforces the other.

Vulnerabilities

The underlying machine learning techniques are not novel. Transformers, attention mechanisms, loss functions, diffusion models — all are commodity. A competitor could use the same underlying architectures with different integration approaches. Individual layers carry design-around risk: monocular depth estimation instead of LiDAR; a different metadata schema; a different training protocol instead of single-parameter variation. Each substitution degrades the system somewhat but does not eliminate the capability entirely.

The prior art landscape is also substantial. Style transfer, video captioning, LiDAR-assisted scene understanding, and single-parameter training all have extensive bodies of published research. The four granted US patents survived initial examination, but inter partes review proceedings at the PTAB have invalidated claims in approximately 60–70% of instituted reviews. The four granted US patents have not been tested in this arena.

The portfolio is strong because of its coordination. Designing around any single patent is possible. Designing around the entire coordinated system is substantially more difficult and would likely result in a materially different technology. The real defensive depth comes from the combination of patent protection, proprietary data, specialized talent, and production integration — not from any single element in isolation.

The InterPositive acquisition does not exist in isolation. Netflix has been assembling a vertically integrated AI production stack through a series of moves that, taken together, suggest a systematic strategy to control every layer of the content pipeline.

Eyeline Studios, Netflix's in-house visual effects division built on the Scanline VFX acquisition, handles heavy visual effects — the kind of work traditionally outsourced to facilities like Industrial Light & Magic or Weta. InterPositive occupies a different position in the pipeline, handling the on-set and near-set augmentation that sits between principal photography and heavy post-production. These are not competing tools. They are adjacent layers in a vertical stack Netflix now controls end-to-end.

Netflix has already begun integrating AI tools into active productions. In early 2025, El Eternauta used AI-assisted visual effects for a Buenos Aires building-collapse sequence — an early proof of concept for embedding these tools inside a live production pipeline. But the project the industry will judge the technology by is David Fincher's The Adventures of Cliff Booth, a Netflix original starring Brad Pitt, written by Quentin Tarantino, and shot by Erik Messerschmidt. Bloomberg has reported that Fincher used InterPositive's tools on the film, and if that work is visible in the finished product, it will establish a benchmark no patent filing can match. Fincher's post-production process is famously granular: freelance colorist Eric Weidt, who has graded every Fincher project since 2015, builds a custom HDR pipeline from dailies through final grade on FilmLight's Baselight, working in a desaturated, high-contrast palette with preserved shadow detail — dual timelines separating creative decisions from technical corrections, iterating over weeks or months while Fincher sends pixel-level notes remotely. Any AI system that touches those frames has to slot into that chain without disrupting it. Automated wire removal, synthetic coverage, and AI-driven relighting either survive the scrutiny of a director who requests triple-digit takes and a colorist whose Baselight sessions are measured in months, or they don't. Few other directors' endorsements would carry the same weight.

The pattern is consistent: rather than licensing external tools, Netflix builds or acquires proprietary technology and keeps it exclusive. Every production capability Netflix owns internally is a capability it does not pay a vendor markup on — and a capability competitors cannot access.

InterPositive is not the only company applying AI to film production, but its positioning is distinct — and the competitive picture is more nuanced than a simple comparison of feature sets suggests.

Flawless AI, founded by director Scott Mann, occupies a complementary position that deserves direct comparison. Flawless operates on the performance layer — its TrueSync technology modifies actors' lip movements and facial expressions for dubbing and localization; its DeepEditor allows editors to reshape on-screen dialogue, fix timing issues, and transfer expressions between takes. Flawless works on the human element of the frame. InterPositive explicitly avoids it. The distinction is clean: one handles the performance layer, the other handles the technical layer, and neither attempts to generate content from nothing. The critical market difference is structural: Flawless licenses its technology to any studio that wants it. InterPositive does not — it now belongs exclusively to one streamer.

At the tool layer, AI-powered capabilities already ship in widely used software. DaVinci Resolve Studio includes Relight FX for AI-driven relighting, Magic Mask for subject isolation, and UltraNR for neural noise reduction. These are real, shipping tools that working editors and colorists use today. The prompt-to-video tools — Sora, Runway, Luma, Pika, Kling — operate in a fundamentally different space: they train on internet-scale datasets and generate video from text prompts. InterPositive assumes filming has already occurred and enhances it. Comparisons between InterPositive and these tools, while common in press coverage, are largely misplaced.

The InterPositive acquisition landed during a period of acute tension between Hollywood's creative workforce and the studios over AI. The SAG-AFTRA negotiations of 2023 produced contract language specifically addressing AI-generated performances, and the below-the-line unions have been watching the technology closely.

InterPositive's pitch is that AI handles the "logistical difficult technical stuff," freeing directors to focus on the performances. That framing positions the technology as efficiency-enhancing rather than labor-displacing. But the craft roles InterPositive's tools can reduce — location crews, additional camera setups, certain VFX passes — represent real employment for real workers. Affleck acknowledged the economics bluntly on the Joe Rogan podcast: AI could replace offshore rendering work and redirect those budget savings toward actors and core creative elements. That is a direct statement about labor displacement, however it is framed.

Netflix's stated position is that the acquisition is "really not about cheaper — it's really about better." Whether this distinction holds as the technology matures and its cost-saving potential becomes clearer will depend less on the technology itself and more on how aggressively it is deployed, and whether the savings are shared with the workforce or captured entirely as margin improvement. What will determine that outcome is not intent but leverage — and leverage, in this case, begins with what the patents actually protect.

Netflix has gone proprietary. The entire sixteen-person team, the trained models, the custom workflows, and the production-integration methodology are inside Netflix's walls. No other studio, streamer, or production company can access them.

Every major competitor — Apple, Amazon/MGM, Disney, Paramount, Sony — faces the same production cost pressures, the same union documentation requirements, and the same need to automate foundational post-production tasks without replacing human creative judgment. None of them have InterPositive. None of them can license it.

What they need is an equivalent pipeline that ingests the full multimodal production dataset; builds a per-production model trained exclusively on that production's own material; automates the foundational pass across dailies grading, editorial, VFX cleanup, and conform; produces a clean, embedded provenance audit trail with every deliverable; and runs on infrastructure they control. This system does not currently exist as a product in the market.

The post-production industry already runs on tools that handle similar media-layer operations. Several already have plugin architectures or node-based processing graphs that could host ML inference as just another node in the chain. The individual components are on the shelf. The assembly instructions are what is missing — and what is now protected.

WO2025255433A1 deserves separate strategic consideration because its commercial implications extend far beyond professional filmmaking. The consumer tools patent covers AI-based filmmaking tools for consumer use — capturing scenes in various formats and applying processing techniques to affect visual outcomes, including film stock simulation and film processing techniques.

Netflix reported more than 300 million paid memberships at the end of 2024, the last quarter for which it published a subscriber count. Potential applications include a Netflix mobile feature letting subscribers apply cinematic looks to personal videos using authentic film stock emulations; a Netflix Creator program offering simplified AI filmmaking tools to independent filmmakers; or integration with Netflix's existing content pipeline for user-generated content.

The film stock simulation capability is technically distinct from conventional filter applications. Rather than applying a color grade overlay after the fact, the patent describes AI-powered reproduction of the actual photochemical characteristics of specific emulsions — color rendition, contrast curves, grain structure, highlight/shadow behavior of particular film stocks. Whether the claims as filed are specific enough to survive examination and distinguish themselves from existing film simulation products would require analysis of the actual claim language. This application has not been granted anywhere. Its commercial potential is real but speculative.

Netflix acquired six things:

1. Exclusive Technology: InterPositive's tools will not be sold, licensed, or made available to any other studio. Every competitor will need to build or buy their own system from scratch.

2. Patent Protection Through 2045: The earliest patents in the portfolio won't expire for nearly two decades. Competitors face either designing around the claims or negotiating licenses — neither of which Netflix is obligated to grant. Four US patents are granted and enforceable now: US 12,322,036 B1, US 12,438,995 B1, US 12,511,837 B1, and US 12,511,904 B1.

3. Proprietary Training Data: Purpose-built datasets captured on controlled soundstages over four years of development. This data cannot be replicated without equivalent capital investment and years of development. Critically, every Netflix production now generates additional training data — the models improve continuously as more production material enters the system.

4. A Specialized Team: Sixteen people with deep expertise in both AI engineering and professional filmmaking — a combination that takes years to develop and is extremely rare. InterPositive has a proven capability to attract, organize, and deploy talented engineers and filmmakers with world-class results in a compressed timeframe.

5. A Production-Ready System: InterPositive's technology is not a research prototype. The pipeline — including a bespoke browser-based review and dispatch application integrating directly with After Effects and ShotFlow — is designed for use by post-production professionals. Netflix can deploy it on actual productions immediately, without major additional capital expenditure to integrate with existing workflows.

6. The Consumer Tools Patent: WO2025255433A1 opens the door for subscriber-facing features — a potential competitive advantage in the streaming wars that extends beyond professional production entirely.

Based on the patent portfolio and the strategic trajectory of the acquisition, three developments seem likely: real-time on-set AI assistance, which would extend the system from post to on-set use so that directors and cinematographers can preview AI-enhanced footage during principal photography; automated dailies processing at scale across Netflix's entire production slate; and custom models per production, trained on a show's existing footage so the AI learns the specific visual language of that particular project.

Extended patent filings are also probable. The captioner patent (US 12,511,904) is currently US-only; international filings seem likely. Additional patents covering new capabilities developed by the team at Netflix are expected. Continuation applications from the existing patents could further extend coverage.

The Fincher validation will be closely watched. If the technology performs at the level Fincher demands — and Fincher is known for technical precision — it will establish a benchmark that other productions and competitors will measure against. That proof point matters more than any patent filing for adoption in premium production.

The InterPositive portfolio is the most comprehensive intellectual property position in AI-assisted filmmaking assembled to date. Netflix's acquisition delivers an exclusive, production-ready system with no near-term competitive equivalent. The patent protection extends through 2045. The proprietary training data cannot be replicated. The specialized team is embedded within Netflix's production infrastructure.

But the analysis must be honest about what the portfolio is and is not. It is a strong, coordinated IP position built on specific technical implementations and anchored by four granted US patents. It is not an impregnable fortress. The underlying ML techniques are commodity. Individual layers can be designed around. The PCT applications are unexamined. The prior art landscape includes substantial published work in every domain the patents touch. The June 7, 2024 provisional did not itself become a patent, but the public record shows that its subject matter was carried forward into later U.S. and PCT family members; as of March 2026, the enforceable U.S. core is four granted patents, not three. The consumer tools opportunity remains speculative.

The strategic value lies not in any single patent or innovation, but in the systematic coordination of the entire pipeline combined with the proprietary data, specialized talent, and production integration that Netflix now controls. Each element reinforces the others. The patents protect the methods. The data makes the methods work. The team knows how to connect the methods to real production workflows.

For everyone else in the industry, the acquisition is an urgent signal. The individual components needed to build an equivalent system are available. The integration expertise is scarce. Whoever assembles it first — or acquires a team capable of doing so — captures the competitive advantage that InterPositive can no longer provide to the rest of the market.

The parts are on the shelf. The assembly instructions are now protected for the next twenty years.
